ChatGPT for self-reflection: does it really help?

Yes, it can help. But not because it "understands you" like a coach.

ChatGPT tends to help when you use it as a structure tool: it slows you down, asks questions, summarizes what you said, and helps you test alternative interpretations. In research on ChatGPT used for mental-health-style support, people report benefits like psychoeducation, emotional support, goal setting, self-monitoring, and CBT-style exercises.

At the same time, the tradeoff is clear: large language models can be helpful and still be unreliable. They can generate plausible but wrong content and present it with confidence, which matters in health-adjacent contexts.

A useful framing is:

- ChatGPT can support reflection as a mirror and a notebook.

- It should not be treated as an authority on what is true, what you "have," or what you should do.

What studies suggest so far

There are not many long, high-quality clinical trials specifically testing "ChatGPT for self-reflection" as a product category. But we do have relevant evidence from adjacent areas.

1) Qualitative evidence: it can provide useful support patterns

A 2024 qualitative study analyzing ChatGPT for mental health support reported "positive factor" categories such as emotional support, goal setting and motivation, self-assessment and monitoring, CBT elements, and guided exercises. It also reported "negative factor" categories including ethical and legal issues, accuracy and reliability problems, limited assessment capability, and cultural or linguistic limitations.

That is close to the real-world experience of using ChatGPT for reflection: it can be useful, and it can fail in predictable ways.

2) Early journaling research: LLM-based journaling can improve self-reported outcomes (small studies)

An 8-week exploratory study of an LLM-driven journaling system reported improved affect; reduced negative affect, loneliness, and anxiety and depression measures; and small improvements in mindfulness and self-reflection.

It’s promising, but it’s also small and not the same thing as "ChatGPT used in the wild."

3) Stronger evidence for chatbots generally (not necessarily LLM-based tools like ChatGPT)

A 2025 randomized controlled trial of topic-based chatbots found improvements in self-care outcomes and mental health measures versus a waitlist group, and noted how quickly the technology landscape shifted between planning and publication.

This supports the idea that structured, chat-based self-care can work. It does not prove that an open-ended LLM is always safe or accurate in every situation.

The biggest limitation: it depends on the user (a lot)

ChatGPT is not a fixed intervention. It’s a general model that adapts to the prompt.

That means outcomes depend heavily on:

- what you ask

- how honest and specific you are

- whether you can separate facts from interpretation

- whether you notice when it’s guessing

- whether you follow through after the conversation

Two people can use the same tool and get opposite results. One gets clarity and action. Another gets rumination with better wording.

The known failure modes (what to watch out for)

Common failure modes appear across studies and real use. Be aware of these when you use ChatGPT for reflection.

1) Overconfidence and hallucinations

LLMs can sound certain while being wrong. In health and science contexts, they can also produce misleading content or amplify misinformation.

In self-reflection, this can show up as:

- "diagnosis-ish" language you didn’t ask for

- mind-reading: "they did this because…"

- false precision: "this means you have…"

- advice that skips your real context

2) Limited assessment: it can’t truly "see" you

ChatGPT doesn’t observe tone, body language, relationships, history, or risk signals the way a human coach or clinician can. Research often describes this as limited assessment capability.

3) Privacy: consumer chatbots are not therapy rooms

If you use ChatGPT as your private journal, you should understand the data model behind it.

OpenAI describes privacy controls that can limit the use of your content for training, and it also explains that certain business offerings, such as enterprise-style products and the API, are not used for training by default.

But the reflection takeaway is simple: don’t assume a consumer chatbot is the same as a private coaching space.

If something is medical, deeply sensitive, or legally risky, treat privacy as a first-class requirement, not an afterthought.

When ChatGPT is a good fit (and when it isn’t)

Good fit:

- daily check-ins: what happened, what did I feel, what do I try next

- clarifying a messy situation into bullet points

- generating alternative interpretations (CBT-style reframing)

- turning goals into small experiments

- writing follow-up questions for tomorrow

Not a good fit:

- crisis situations

- anything requiring diagnosis or treatment decisions

- situations where you tend to outsource judgment to the tool

- topics where privacy must be strict

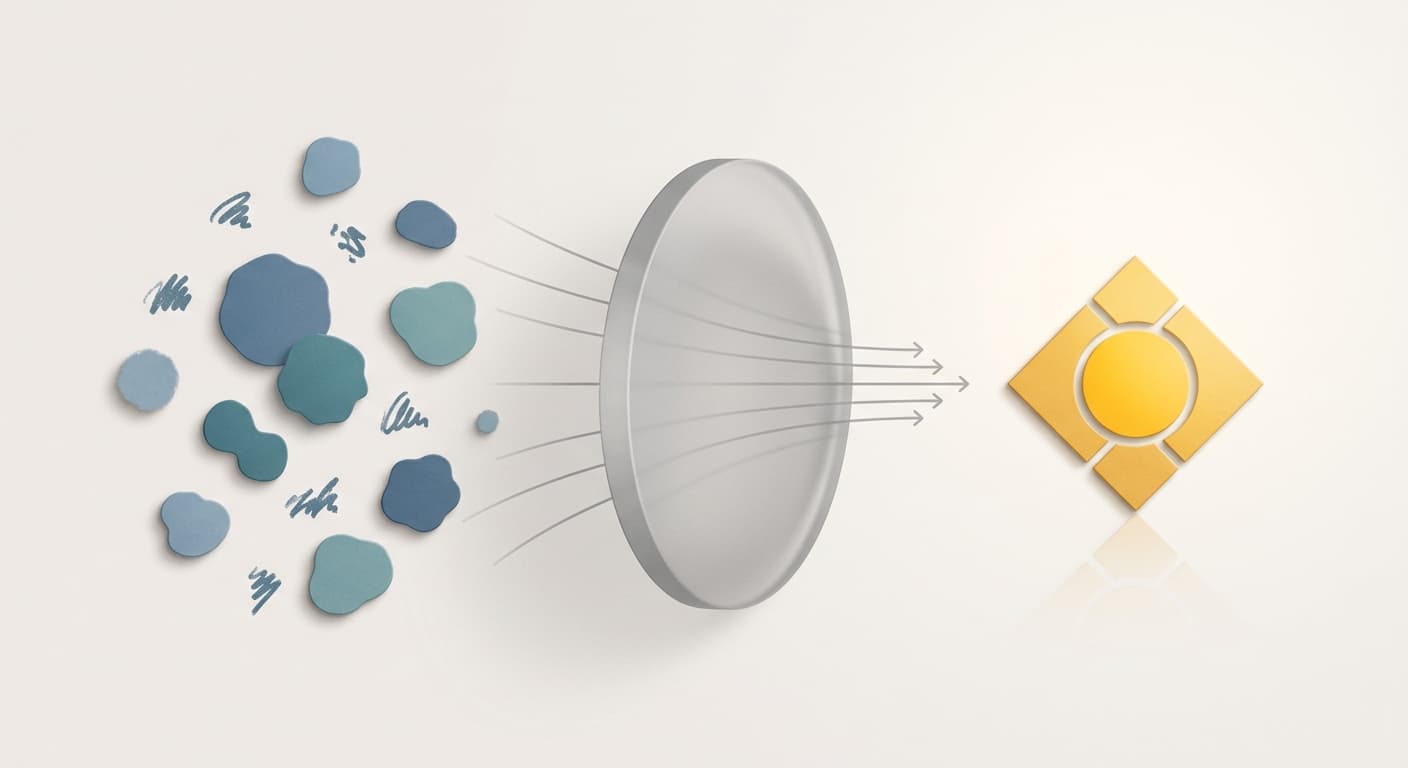

Why Mendro is different (and why it matters)

ChatGPT is a general tool. Mendro is built as a self-reflection product.

Here’s what changes when reflection is designed intentionally.

1) Mendro gives you a real mental space, not just chat

In ChatGPT, the default experience is dialogue. Mendro is built for reflection workflows. You can capture insights, organize themes, create small tools, and set reminders inside the same personal space, so reflection turns into behavior, not just text.

2) Built on coaching psychology (not generic conversation)

General models try to be helpful for everyone. Mendro is designed around coaching structure: clearer questions, better pacing, less random advice, and more focus on what you actually need next.

3) Privacy by design

For many people, self-reflection becomes meaningful only when it feels private. With consumer AI tools, you often have to actively manage settings and still shouldn’t treat the experience like a confidential clinic room.

Mendro’s goal is to make your reflection space feel like it belongs to you, because in real coaching, psychological safety isn’t optional.

A safer way to use ChatGPT for self-reflection (if you still want to)

Use this rule: ask for process, not truth.

You can paste this once:

Act like a coaching-style reflection guide. Ask one question at a time. No diagnosis. Don’t assume missing facts. Label guesses as guesses. Help me separate facts, feelings, needs, thoughts, and next actions.

Then keep the session simple:

- facts: what happened

- feelings: what did I feel

- meaning: what story am I telling

- options: what else could be true

- experiment: one small next step

- follow-up: when will I check in
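
If you like keeping this routine in a file instead of retyping it, the prompt and the six steps above can be sketched as a small Python helper. This is only an illustration: the names `GUIDE_PROMPT`, `SESSION_STEPS`, `build_session_template`, and `missing_steps` are made up for this example, and the code does not call ChatGPT or any other service. It just produces text you can paste into a chat and checks which steps you have not answered yet.

```python
# Hypothetical helper: nothing here talks to ChatGPT or any API.
# It only assembles the paste-once prompt and the six-step session outline.

GUIDE_PROMPT = (
    "Act like a coaching-style reflection guide. Ask one question at a time. "
    "No diagnosis. Don’t assume missing facts. Label guesses as guesses. "
    "Help me separate facts, feelings, needs, thoughts, and next actions."
)

# (label, question) pairs, in the order listed in the article.
SESSION_STEPS = [
    ("facts", "What happened?"),
    ("feelings", "What did I feel?"),
    ("meaning", "What story am I telling?"),
    ("options", "What else could be true?"),
    ("experiment", "What is one small next step?"),
    ("follow-up", "When will I check in?"),
]


def build_session_template(topic: str) -> str:
    """Return the guide prompt followed by a numbered outline for one session."""
    lines = [GUIDE_PROMPT, "", f"Topic: {topic}", ""]
    for number, (label, question) in enumerate(SESSION_STEPS, start=1):
        lines.append(f"{number}. {label}: {question}")
    return "\n".join(lines)


def missing_steps(answers: dict[str, str]) -> list[str]:
    """List the step labels that have no answer (or only whitespace) yet."""
    return [
        label
        for label, _question in SESSION_STEPS
        if not answers.get(label, "").strip()
    ]


if __name__ == "__main__":
    print(build_session_template("Tense meeting with my manager"))
    print(missing_steps({"facts": "We disagreed about the deadline."}))
```

The `missing_steps` check is there for one reason: a session that stops at "meaning" without reaching "experiment" and "follow-up" is the rumination pattern described earlier, so it helps to see at a glance which steps are still empty.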

Bottom line

A balanced read of the early evidence looks like this:

- ChatGPT can help with structure, reflection prompts, and CBT-style reframing, and early studies on AI journaling are promising.

- It also has real limitations: accuracy issues, overconfidence, limited assessment, and privacy concerns. Results depend heavily on the user.

- Mendro improves the experience by designing self-reflection as a coaching-psychology workflow inside a personal mental space, not a generic chat window.