What prompts do

Most people try AI prompts for reflection the way they try a new notebook. They hope the tool will make them honest, consistent, and insightful. That usually does not happen.

A good prompt does something quieter and more useful. It provides a steady structure your mind can step into when you are stressed, distracted, or stuck. That structure is what turns vague experience into things you can name, sort, and choose. In other words, prompts do not produce wisdom; they make reflection usable.

The practical question then becomes simple: if you want reflection that actually helps, what should your prompts do?

If you want to move from theory to practice quickly, it helps to use a dedicated reflection space that turns your answers into a coherent entry you can revisit later. In Mendro, you can try these prompt patterns directly, and your responses become a personal journal you can pick up again in later sessions. The approach is rooted in coaching psychology: structured questions, gentle perspective shifts, and small next-step experiments rather than abstract advice. Because your reflections are built from your own words and context, the result stays specific to you rather than generic.

Why structure helps

Reflection gets slippery when your brain tries to do several jobs at once: feel something, interpret it, defend against it, and decide what to do next. When those jobs blur, you end up ruminating, looping, or journaling without progress.

Structure helps by separating those jobs into steps. When you move from concrete observation to interpretation to action, you reduce ambiguity and reveal real decision points. That is the core mechanism: sequence reduces confusion, and clear categories reduce guessing. Different moments need different prompt shapes, for example reflection-in-action (while something is unfolding) versus reflection-on-action (looking back afterward). Matching the prompt to the moment keeps the work doable.

Design spec

Strong reflection prompts share a few practical traits: they fit the situation, arrive at the right time, follow a helpful sequence, and let you work at multiple depths rather than forcing a single leap.

A useful prompt should do at least three of these:

- Anchor in specifics.

- Create a clear next step.

- Widen perspective without invalidating your view.

- Keep you as the decision-maker.

Avoid two common failure modes:

- Leading you to a conclusion it invented.

- Letting you produce convincing noise that looks like insight.

Use those constraints as a north star when you design prompts. The examples below follow this spec.

Five prompt patterns

You do not need dozens of prompts. Learn a few patterns you can reuse. Think of each pattern as a mode of reflection suited to a particular kind of moment.

Pattern 1: The concrete scan

Use this when you feel foggy, flooded, or generally off and cannot tell why. The goal is to reduce ambiguity by collecting observable inputs.

Prompt: "Act as a neutral reflection partner. Ask me seven short questions to map what is happening right now. Keep them concrete: body sensations, emotions, recent events, and immediate constraints. Ask one question at a time and wait for my answer before the next."

Follow-up: "Summarize what I said into facts, feelings, needs, and open questions. Keep it grounded and do not add interpretation."

Why it works: converting a vague state into categories makes thinking possible again.

Where it breaks: this pattern maps; it does not diagnose. Do not use it to pin down a single root cause too early.

Pattern 2: The timeline replay

Use this when something went sideways and your mind keeps replaying it. The mechanism is sequencing, which reduces hindsight bias and exposes decision points.

Prompt: "Help me replay an event as a timeline. Ask me to describe what happened first, what I noticed, what I assumed, what I did, and what happened next. After the timeline, help me identify two moments where a different choice was possible."

Follow-up: "For each moment, give three alternative actions: one minimal, one assertive, one experimental. Then ask which I would choose next time and why."

Why it works: it separates observation, assumption, and action, which is where learning lives.

Where it breaks: when you use it to prosecute yourself. Keep the tone curious, not punishing.

Pattern 3: The competing stories check

Use this when you are stuck in one interpretation and cannot tell if it is true or just loud. The mechanism is perspective shifting: generating alternative hypotheses you can test.

Prompt: "I will tell you my current story about a situation. Your job is to generate three alternative stories that are plausible and compassionate, without dismissing mine. For each story, list evidence that would support it and evidence that would weaken it. Then ask me which evidence I actually have."

Follow-up: "Now help me write a one-sentence current best explanation that includes uncertainty."

Why it works: it turns narrative certainty into testable claims.

Where it breaks: when you treat the alternatives as equally true. The goal is openness, not relativism.

Pattern 4: The values to action bridge

Use this when you know how you feel but not what to do. The mechanism is converting abstract values into concrete constraints that change your next choice.

Prompt: "Help me make a decision using values. Ask me: what outcome I want, what I am avoiding, which value I want to honor, and what trade-off I am willing to accept. Then propose three next actions that fit my value, each with a likely downside."

Follow-up: "Ask me to choose one action and write a two-sentence commitment: what I will do and what I will not do."

Why it works: it keeps the decision human, with you choosing the trade-offs.

Where it breaks: when you let the AI pick your value for you. Values are yours, not inputs the model should assume.

Pattern 5: The pattern spotter

Use this when you have many entries or recurring situations but no synthesis. The mechanism is aggregation, which reveals repetition your working memory cannot hold.

Prompt: "I will paste ten short journal entries. Extract recurring themes, triggers, and needs. Then reflect them back as: (a) my top three recurring situations, (b) my default coping response, (c) the cost of that response, (d) one small experiment to test a different response. Only use what is in the text."

Follow-up: "Ask me five questions to confirm or correct your pattern summary. Do not assume you are right."

Why it works: it turns raw text into testable hypotheses and forces validation.

Where it breaks: when you paste sensitive information into a system you do not trust. Pattern spotting is powerful, but it requires care with privacy.
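If you run Pattern 5 often, it can help to keep the instruction text fixed and only swap in your entries. The sketch below is a minimal, hypothetical Python helper (`build_pattern_spotter_prompt` is an illustrative name, not part of Mendro or any other tool). It drops blank entries and numbers the rest, so the model's summary and your follow-up questions can point back to specific entries. It only builds text and sends nothing anywhere, so the privacy caveat still applies to wherever you paste the result.

```python
# Hypothetical helper: assembles the Pattern 5 prompt around your own entries.
# It only formats text; sending it to a model is left to a tool you trust.

PATTERN_SPOTTER_INSTRUCTIONS = (
    "Extract recurring themes, triggers, and needs. Then reflect them back as: "
    "(a) my top three recurring situations, (b) my default coping response, "
    "(c) the cost of that response, (d) one small experiment to test a "
    "different response. Only use what is in the text."
)


def build_pattern_spotter_prompt(entries: list[str]) -> str:
    """Number each non-empty entry and wrap the list in the Pattern 5 instructions."""
    cleaned = [e.strip() for e in entries if e.strip()]  # skip blank entries
    if not cleaned:
        raise ValueError("need at least one journal entry")
    numbered = "\n".join(
        f"Entry {i}: {text}" for i, text in enumerate(cleaned, start=1)
    )
    header = f"I will paste {len(cleaned)} short journal entries."
    return f"{header}\n\n{numbered}\n\n{PATTERN_SPOTTER_INSTRUCTIONS}"


if __name__ == "__main__":
    # Made-up sample entries, for illustration only.
    demo = [
        "Skipped lunch again before the team review.",
        "   ",
        "Snapped at my partner after a long call.",
    ]
    print(build_pattern_spotter_prompt(demo))
```

The header states the real count of entries rather than a fixed "ten", so the prompt stays accurate when you paste fewer.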

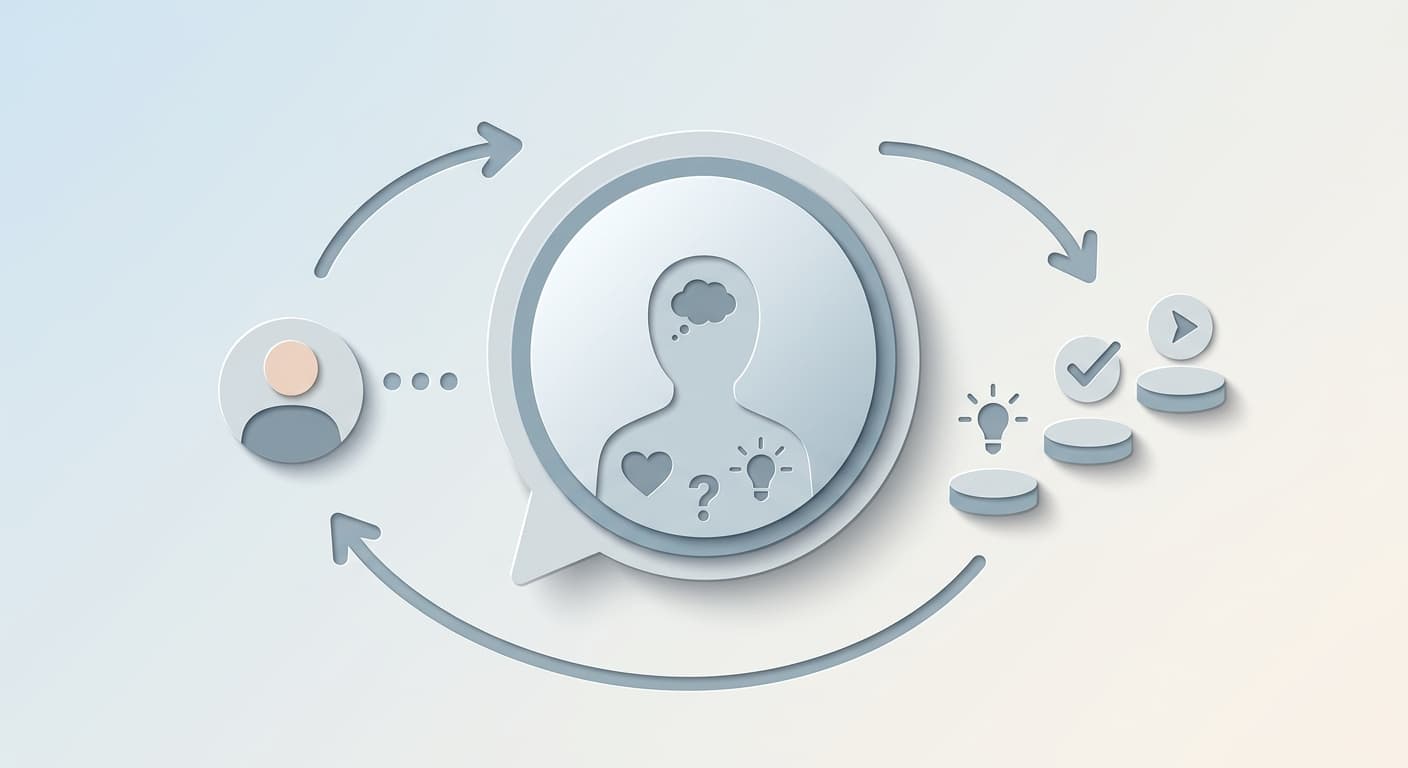

Keep reflection user-led

AI is very good at producing plausible interpretations. That is also the danger. Design prompts so the model must earn its inferences and keep you in control.

Two practical moves work well. First, require questions before conclusions. For example: "Before you summarize or suggest anything, ask me three clarifying questions. If you are uncertain, say what you are uncertain about."

Second, have the model separate what you said from what it infers. For example: "Return two sections: direct quotes or close paraphrases of my points, and your inferences stated as hypotheses with confidence levels: low, medium, or high."

These rules reduce false clarity, the feeling of insight that is actually just coherence.
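If you keep the model's replies (for example, by saving them alongside your journal), the two-section rule maps naturally onto a small record type. The Python sketch below is one hypothetical way to do it; `ReflectionReply` and `Inference` are illustrative names, not an existing library. It keeps your points and the model's inferences in separate fields and rejects any confidence label other than low, medium, or high.

```python
from dataclasses import dataclass, field
from typing import Literal

Confidence = Literal["low", "medium", "high"]


@dataclass
class Inference:
    claim: str              # stated as a hypothesis, never as a fact
    confidence: Confidence

    def __post_init__(self) -> None:
        # Literal is not enforced at runtime, so check the label explicitly.
        if self.confidence not in ("low", "medium", "high"):
            raise ValueError(
                f"confidence must be low, medium, or high, got {self.confidence!r}"
            )


@dataclass
class ReflectionReply:
    what_you_said: list[str] = field(default_factory=list)     # quotes or close paraphrases
    inferences: list[Inference] = field(default_factory=list)  # the model's hypotheses

    def render(self) -> str:
        """Print the two sections separately so inference never blends into fact."""
        lines = ["What you said:"]
        lines += [f"- {point}" for point in self.what_you_said]
        lines.append("What I infer (hypotheses):")
        lines += [f"- {inf.claim} [confidence: {inf.confidence}]" for inf in self.inferences]
        return "\n".join(lines)


if __name__ == "__main__":
    reply = ReflectionReply(
        ["I keep rewriting the same email."],
        [Inference("You may be worried about how it will be received.", "low")],
    )
    print(reply.render())
```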

Timing and context

Reflection feels different depending on timing and stress. A helpful prompt on a calm Sunday may feel impossible during a deadline or an argument. Make prompts sensitive to context and energy.

If you are low energy, ask for the smallest useful step: "Give me the smallest possible reflection. Ask one question I can answer in 30 seconds. Then offer two options: stop, or go one layer deeper."

If you are high intensity, keep things grounded: "Ask what is urgent, what is important, and what can wait 24 hours. Then ask what support I need to do the urgent part."

The aim is to reduce friction so the reflection actually happens.

Dos and don'ts

Do ask for sequential steps and concrete examples. Do ask the model to mirror your words before interpreting. Do treat insights as hypotheses, not final verdicts.

Do not ask "What is wrong with me?" Do not outsource moral judgment. Do not paste identifiable data into tools you do not trust. Do not confuse eloquent summaries with truth.

Also be cautious with marketing claims about large clarity improvements. Verify study details before trusting big numbers.

A reusable prompt

If you want one default prompt that works across many situations, use this.

"Be my reflection partner. Your job is to help me think, not to think for me.

Process:

- Ask me three clarifying questions, one at a time.

- Mirror what I said in four buckets: facts, feelings, needs, choices.

- Offer two to four hypotheses about what might be going on. Mark each as low, medium, or high confidence.

- Propose three next-step experiments, each small enough to do in 15 minutes or less.

- End by asking what I want to keep, change, or ignore from your output.

Constraints:

- Do not give medical or legal advice.

- Do not assume you know my values; ask.

- Use plain language. Keep it grounded."

This is not a magic formula. It is a repeatable structure that slows the mind down, separates functions, and returns agency to you.
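Because the prompt is a fixed structure, you can also keep its parts as data and reassemble them, which makes it easy to add a situational constraint, such as the 30-second question from the low-energy variant, without retyping everything. This is a minimal Python sketch, assuming you paste the printed text into whatever chat tool you use; `build_reflection_prompt` is a hypothetical helper, not part of any product.

```python
# Hypothetical helper: the reusable prompt stored as data, so one step or
# constraint can be edited without retyping the whole prompt.

ROLE = "Be my reflection partner. Your job is to help me think, not to think for me."

PROCESS = (
    "Ask me three clarifying questions, one at a time.",
    "Mirror what I said in four buckets: facts, feelings, needs, choices.",
    "Offer two to four hypotheses about what might be going on. "
    "Mark each as low, medium, or high confidence.",
    "Propose three next-step experiments, each small enough to do in 15 minutes or less.",
    "End by asking what I want to keep, change, or ignore from your output.",
)

CONSTRAINTS = (
    "Do not give medical or legal advice.",
    "Do not assume you know my values; ask.",
    "Use plain language. Keep it grounded.",
)


def build_reflection_prompt(
    process: tuple[str, ...] = PROCESS,
    constraints: tuple[str, ...] = CONSTRAINTS,
) -> str:
    """Join the role, process steps, and constraints into one plain-text prompt."""
    lines = [ROLE, "Process:"]
    lines += [f"- {step}" for step in process]
    lines.append("Constraints:")
    lines += [f"- {rule}" for rule in constraints]
    return "\n".join(lines)


if __name__ == "__main__":
    # Example: add a low-energy constraint on top of the defaults.
    low_energy = CONSTRAINTS + ("Keep each question answerable in 30 seconds.",)
    print(build_reflection_prompt(constraints=low_energy))
```

Tuples are used for the defaults so the shared lists cannot be mutated by accident between calls.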

When prompts help

AI prompts are most useful when you are overloaded, when you need a steady sequence, or when you want pattern detection across lots of text. They are less useful for deep emotional processing, relational repair, or high-stakes decisions that need domain expertise.

Treat the model as a structured mirror. You bring the lived experience; it brings the frame. That division of labor is what turns AI prompts into reflection that actually produces clarity.